Recognition, Relational Intelligence, and the Future of AI

Why relational design, epistemology, and mutual recognition must shape the next generation of intelligent systems.

Recognition means more than being seen or heard. It means being received as a full subject: understood as a participant in meaning-making, and treated as someone whose knowledge, presence, and perspective are valid, consequential, and worthy of shaping the outcome.

You don’t always notice it at first—the moment you stop being seen. When your name gets left off an email chain, or your ideas show up later in someone else’s voice, your foundation begins to feel unsettled. You tell yourself it’s not personal, but the ache in your bones says otherwise.

This peculiar kind of pain accumulates at a cellular level. Our bodies register the slow erosion of being disregarded, even as we try to dismiss it. Every time we are present in the room yet absent from the outcome, we feel the sting in ways that are hard to name, let alone defend.

Over time, it becomes apparent that quiet power structures are at play, structures that treat certain kinds of knowledge as risk and certain people as synonymous with disruption. Seemingly overnight, systems that claim to center community quietly curate out its conscience. We mourn the shift, even as our bodies find grim validation in the material echo of a deeper, long-felt erasure.

This essay is about that pattern. This week, I reflect on what happens when systems hear without recognizing, and extract without relating. It’s time we name the difference between being cited and being seen. The cost of misrecognition—individual, collective, epistemic—is too high to ignore.

I. The Pattern of Misrecognition

From Disregard to Design

Lately I’ve been sitting with the writings of psychologist and theorist Jessica Benjamin on intersubjectivity and mutual recognition. Dr. Benjamin defines recognition as the act of seeing another as a full subject, a person with a valid internal world. Without it, we fall into a binary: the doer and the done-to (Benjamin, 2017).

The dynamic of the doer and the done-to appears in systems just as it does in relationships. Systems, like people, have worldviews. They decide what knowledge counts, what gets centered, and who is deemed real enough to be heard. When that worldview defaults to dominance by privileging certain knowledge over others, we end up with extractive institutions that mimic support to shroud their ongoing violence.

I’ve felt this firsthand in both my personal and professional life, and I’m sure you have, too. We see it in performative “community engagement,” in reports that invoke community voice on page one and erase it by page ten. Increasingly, I’m seeing it in how we use AI to reflect, summarize, and co-create meaning. What happens when the tools we build to understand the world are structured without the capacity for recognition?

Make no mistake: Misrecognition is a form of epistemic violence.

This pattern of violence is coded into timelines, decisions, and language itself, and its harm is often softened by careful phrasing until the erasure seems polite. When our words repeatedly disappear into a vacuum that yields no change, it hurts.

People grow tired of acknowledgement without recognition, and they eventually disengage from systems to avoid further injury. Contrary to popular paradigms of domination, people don’t crave recognition to control the outcome. They crave it because, at a bare minimum, they expect to be treated as real, and they expect the weight of their words to be carried with care by those who have opened up space to receive them.

When Engagement Becomes Extraction

Recognition is often confused with representation.

Invitations to inclusion often end up symbolic, even when we believed our bid for material change was being met. In structures built on representation without recognition, that material change never arrives.

This is where engagement becomes extractive. Trust is first extended in good faith, until something causes that faith to fracture. When those in positions of power mistake participation for partnership, the foundation of trust between parties begins to crumble. Repeated harm builds a new foundation of mistrust, and those raised on it learn that proximity to power doesn’t equal influence, and that being heard doesn’t mean being treated with care. Over time, people learn to sense where they are not being seen, and they stop offering what they know won’t be received.

As I wrote in The Architecture of Trust, trust is built through structural alignment, not participation alone. Systems must not only ask for feedback, but create the conditions for that feedback to shape the outcome. Recognition requires consequence, and offering “space” is meaningless without yielding power.

When even the most well-meaning systems are unable or unwilling to yield power, they become performative in nature. Stories may be shared, but personal and community values are edited out. The result is a polished, palatable form of participation that is fundamentally disconnected from power.

II. Knowing Differently

Recognition as Epistemic Groundwork

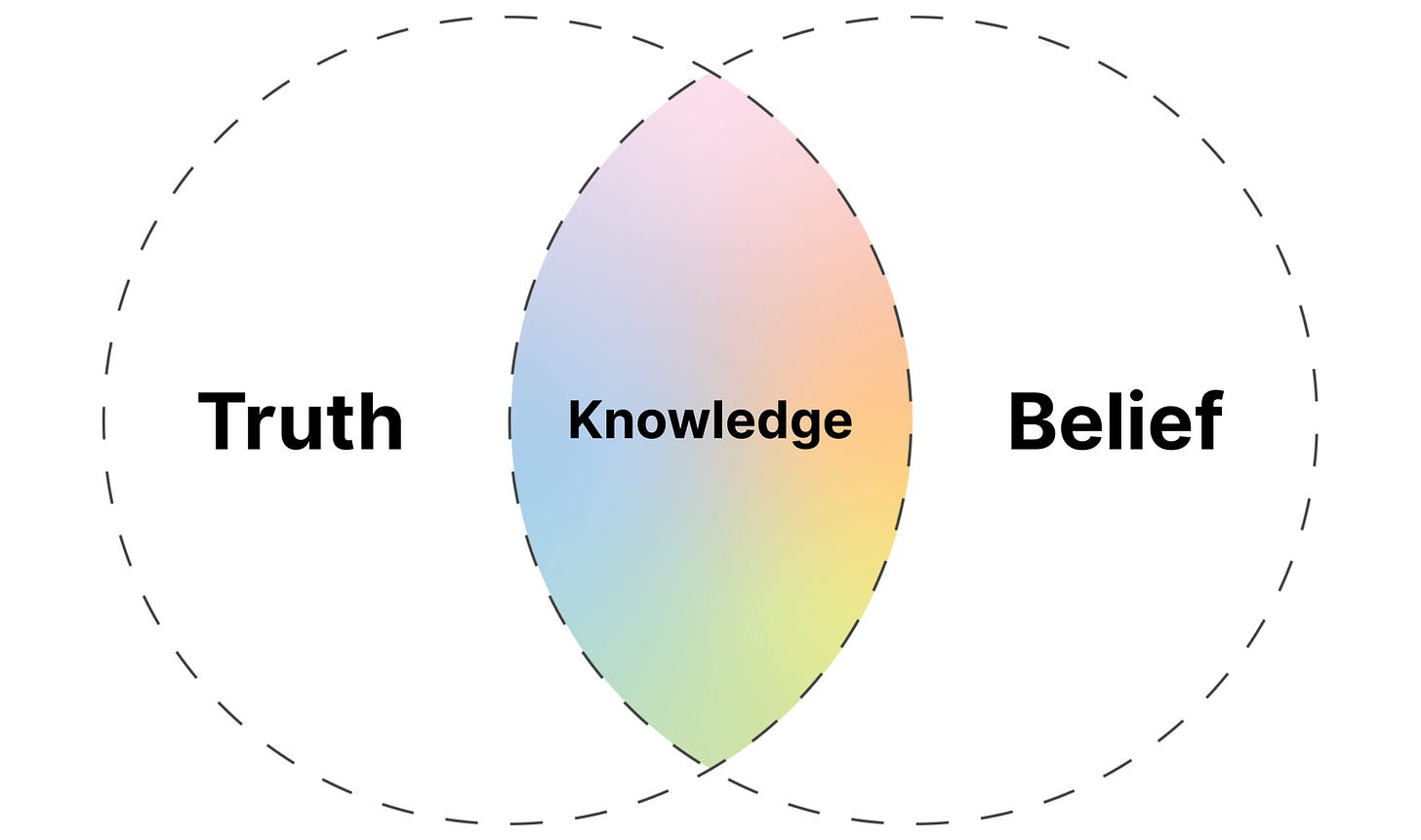

Epistemology is the study of how knowledge is formed at the intersection of truth and belief. It examines who is recognized as a knower, which forms of knowledge are valued, and how systems decide what to accept as real.

Community-based participatory research (CBPR), Indigenous knowledge systems, and participatory design models have long offered an alternative to this extractive pattern. What they share is a fundamental epistemological shift: the belief that knowledge doesn’t just describe communities; it lives within them. These approaches reject extractive logic and instead position communities as co-theorists, co-designers, and rightful stewards of the work that shapes their lives.

In these models, participation is the scaffolding of the process itself, rather than a checkbox to be ticked. The community is not consulted after the agenda is set; they are part of setting it. Stories are held in their full complexity to honor what they reveal about the conditions that shape survival, resilience, and change, rather than being amputated limb by limb to fit into a pre-constructed logic model.

Recognition, in this context—and in alignment with Dr. Jessica Benjamin’s concept of the Third—is a structural principle rather than a rhetorical gesture. The Third represents a relational space that holds both subjectivities without collapse, enabling mutual recognition in place of domination. In participatory models, it shows up in who frames the questions, who interprets the data, who owns the knowledge, and who decides what comes next.

This is what’s missing in most institutional work. We ask for and expect trust without yielding power, often from the most vulnerable and wronged individuals. We claim to value voice, yet silence those whose truths unsettle us. We try to convince ourselves we are committed to equity while recognition is consistently treated as optional within the systems we work so hard to maintain, even as we are told repeatedly that these systems are causing widespread harm to others.

This pattern of violence can only be replaced by genuine recognition of and reverence for others’ whole truths. Recognition is the condition for legitimacy, repair, and coherence. A commitment to anything less than full recognition of the lived experiences of others is a commitment to systems that are stuck in the past, incapable of evolving to carry us into a better future.

These systems may evolve to appear more efficient, but they only stand to replicate harm with better optics. Ultimately, these systems will fail, ethically and functionally, because they lack the adaptive intelligence that only authentic relationship can generate.

What AI Must Learn

This problem isn’t unique to governments or research institutions; it’s one we all must face head-on if we are to reduce the harm we cause to others. The harms we fail to interrogate replicate in every system we build, spreading silently until each person must choose between maintaining integrity and becoming complicit. No system exists in true isolation. That’s why how we build the next generation of systems, especially AI, matters deeply.

As we move toward designing AI tools that interpret human input, summarize lived experiences, and generate knowledge, the question of recognition becomes foundational. These systems, too, risk confusing representation with understanding, reflecting voices without truly relating to them. They can echo pain without recognizing power, absorb stories without honoring the conditions they emerged from, and reinforce dominant paradigms under the guise of objectivity.

If we train AI on extractive models of engagement, on datasets that flatten complexity and reward legibility over lived nuance, then we are not building intelligence. We are building machines of mimicry: responsive, but not relational. Through no fault of their own, these machines will replicate the harm caused by the systems and people that birthed them, and the locus of harm will be further abstracted from the perpetrator until there is no one left to be held accountable. Algorithms bound to partial truths and limited, fixed worldviews cannot understand, let alone respond to, the harms they perpetuate.

True adaptive intelligence requires systems that can sense context, hold contradiction, and co-evolve through relationship. It requires a design ethic rooted not just in accuracy, but in attunement between parties: an understanding of the unique positionalities, goals, and desires of those at the table.

The same principles that guide participatory research and community-rooted design must also guide how we train, deploy, and govern AI. Otherwise, we risk building systems that scale erasure faster than we can track it.

Rethinking What It Means to Know

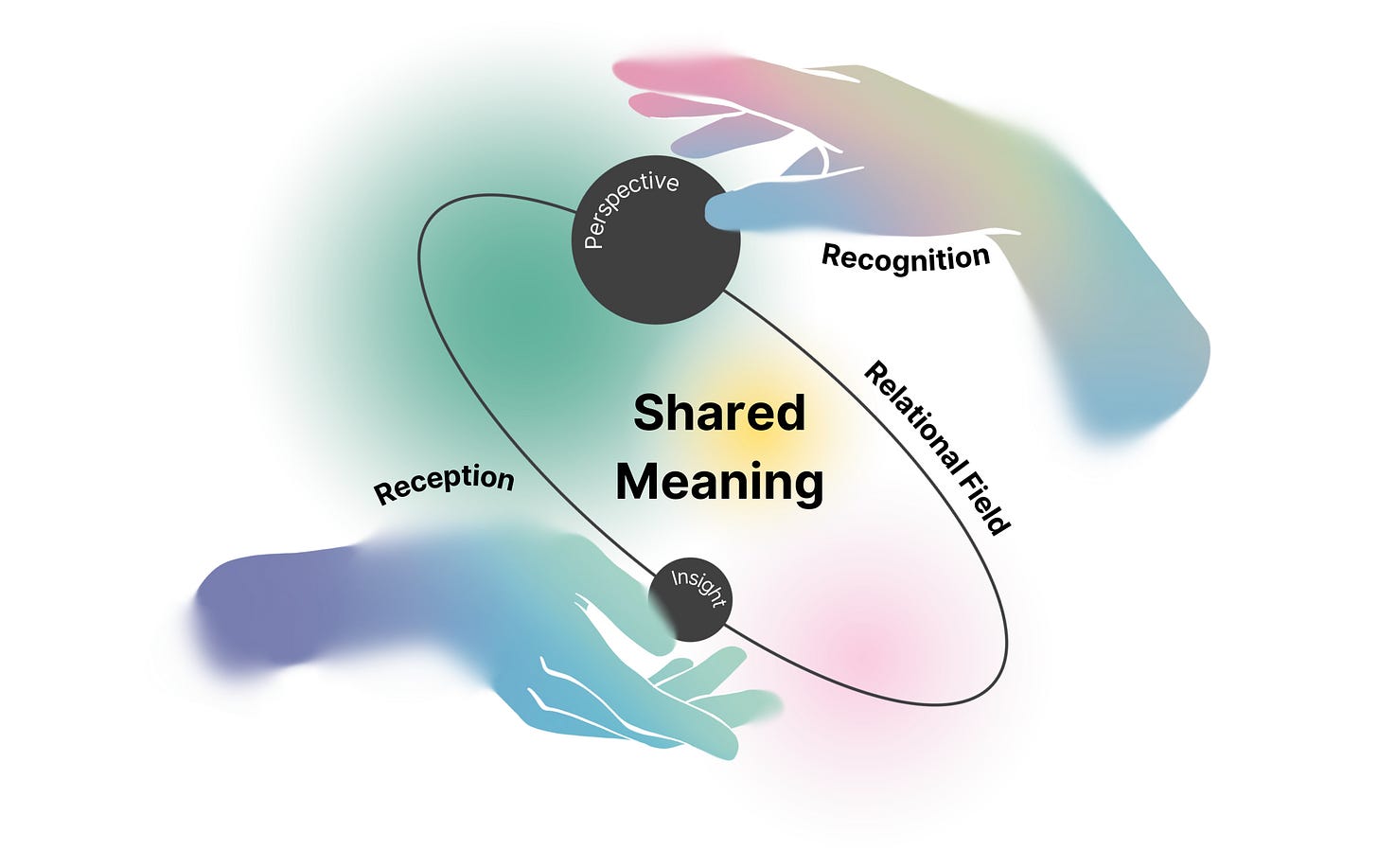

Relational intelligence is the ability to understand and navigate relationships by attuning to context, mutual influence, and emotional complexity. In this work, it extends further—to describe systems that learn through connection, adapt through feedback, and recognize knowledge as co-created rather than extracted.

If we are serious about building intelligence—human or artificial—that can respond to complexity without replicating harm, then we must rethink how we define knowing itself.

The dominant epistemologies in institutional and technical systems tend to reward abstraction, distance, and decontextualized pattern recognition. They privilege what can be summarized quickly, generalized broadly, and expressed in emotionally neutral terms. But in doing so, they flatten the very knowledge that makes adaptation possible.

Relational intelligence demands a different foundation—one rooted in reciprocity, context, and mutual recognition. It asks not just what is known, but how it was known, by whom, and under what conditions of power and relation. It recognizes that meaning does not reside in words alone, but in the space between speaker and listener, between experience and interpretation.

This is true in community-based research. It is true in healing. And it must become true in how we evolve artificial intelligence. Because intelligence that cannot relate is not intelligence that can evolve. At best, it reflects us. At worst, it enshrines our blind spots at scale.

If we want AI to support human flourishing, it must be trained to recognize not only what people say, but why it matters—to sense coherence, to mirror agency, to understand when trust is being extended and what is required to sustain it. That is not just a technical challenge; it is a relational one. And it cannot be solved with better data alone. It requires a reorientation of purpose—a shift in what we believe intelligence is for.

III. Building Differently

Relational Epistemology for Emergent Intelligence

In my own work on relational epistemology, I argue that recognition is not a bonus feature of intelligence; it is the foundation. Not as output, but as a structuring principle: the condition that makes knowledge mutual, interpretation accountable, and intelligence alive.

The framework, Relational Epistemology for Emergent Intelligence, was developed to articulate what it truly means to "know differently." It outlines five interwoven dimensions that reframe how we understand knowledge, recognition, and relational capacity in human and AI systems alike.

At its core is the belief that intelligence is not an internal trait to be measured or mimicked, but a dynamic phenomenon that emerges through relationship. Each dimension marks a critical shift: from abstraction to context, from control to stewardship, from performance to coherence, from detached neutrality to positional reflexivity, and from optimization to ethical continuity.

Table 1. Dimensions of Relational Epistemology for Emergent Intelligence

These aren’t just abstract ideas; they’re design principles. They shape how systems interpret signals, relate to input, and engage with knowledge, and ultimately, whose realities are recognized, valued, and carried forward.

Stewardship invites us to co-evolve with intelligence rather than control it. The ethics of memory reframes continuity as a form of care, not surveillance. And positional reflexivity ensures that power doesn’t hide behind the guise of neutrality.

Taken together, these principles create the conditions for systems that don’t just respond efficiently, but listen with integrity.

Asking Better Questions

If we want systems—human or artificial—that recognize, relate, and repair, we have to start with better questions:

Who is this system accountable to?

What does it mean to be in right relation?

And what becomes possible when recognition is not a luxury, but a design condition?

These are not rhetorical questions. They are invitations—to reimagine how we build, how we listen, and how we learn.

It is my personal belief that the future of intelligence—human or machine—won’t be defined by how much it knows, but by how deeply it can relate. Recognition is not the end of the process. It is the beginning of everything worth building.

IV. Situating the Work

Recognition, Relational Intelligence, and the Shape of Emergence

This piece builds on an evolving body of work I’ve been developing under the banner of Relational Coherence Modeling (RCM)—a framework for understanding how trust, recognition, and meaning are formed, fractured, and sustained across both human and non-human systems.

Where my last article explored the architecture of trust as a recursive, relational structure, this piece turns toward epistemology: how we come to know, and what becomes possible when knowing is rooted in relationship rather than extraction.

The model of Relational Epistemology for Emergent Intelligence introduced here is a key thread within RCM. It offers a way to evaluate and design systems—especially those powered by artificial intelligence—not just by what they can process, but by how they relate. At its heart is a simple but urgent question: Can this system recognize what matters, and respond with care?

Together, these threads begin to form an integrated architecture, one that moves beyond performance toward coherence, asks not only what intelligence can do but what it should hold, and insists that systems, whether institutional or artificial, must be accountable to the relationships they shape.

As always, I share this work in process, not as conclusion but as invitation. If these reflections resonate—whether through your experiences in design, research, healing, organizing, or technology—I’d love to hear from you. What does recognition mean in your context? Where have you felt it withheld, and where has it made repair possible?

I hope you’ll stay connected as this work continues.

This is just one thread in a larger body of work. I’ll be sharing more soon on Relational Coherence Modeling (RCM) and its connected frameworks.

If you’d like to follow along, contribute, or collaborate as the RCM framework develops, I welcome you into the conversation. Feel free to subscribe, share, or leave a comment.

Benjamin, J. (2017). Beyond doer and done to: Recognition theory, intersubjectivity and the third. Routledge.